Human in the Loop, or Bottleneck in Disguise?

Over the holidays, I read David Chandler’s The Campaigns of Napoleon, a voluminous account of the Italian-French general’s professional career. The main takeaway from the book is what everyone knows about Napoleon: He was a military genius.

For example, upon surveying a piece of ground, Napoleon could work out how a battle would unfold there, and formulate contingency plans, in ways that other commanders could not. That skill came from a combination of extensive study and superior intellect.

However, because Napoleon was an objectively superior military decision-maker, he developed a leadership style that relied on micromanagement and rescuing his subordinates when they ran into trouble. This style worked when he led smaller armies in smaller geographies, but it faltered as he rose, most notably in the Russian campaign of 1812.

Chandler writes: “[H]is senior generals were for the most part as good fighting soldiers as ever, but never having been permitted any real initiative by their master, many were to find themselves frequently at a loss when acting on their own. Napoleon, of course, could not be everywhere at once in so vast a terrain as Russia, and so, as we shall see, his strategic schemes broke down repeatedly as his subordinates misunderstood or bungled their tasks.”

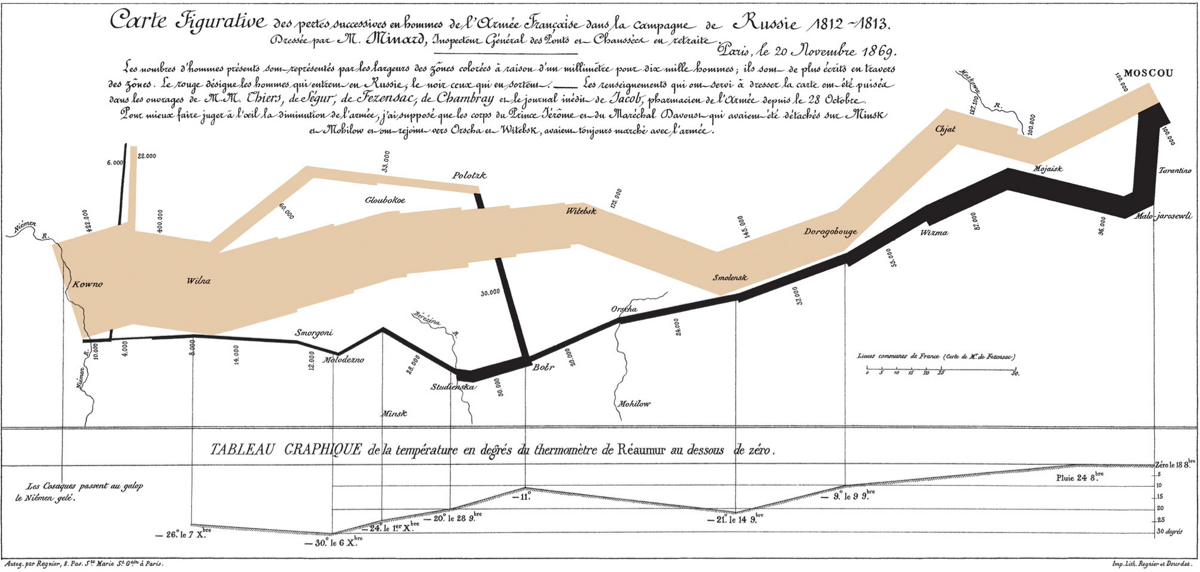

Charles Minard’s 1869 map of Napoleon’s Russian campaign tells the story. The brown line traces the advance toward Moscow, with its width representing troop strength, and the black line traces the retreat. While France’s Grande Armée marched on Russia with an estimated force of over 500,000, it returned to France with well under 100,000.

“The problems of space, time and distance proved too great for even one of the greatest military minds that has ever existed,” writes Chandler, and the subordinates Napoleon had never trusted to lead were left without the capacity to do so.

Military micromanagement wasn’t unique to Napoleon’s era. H.R. McMaster (the future general and national security advisor) describes a particularly stark example of this dynamic from the Vietnam War in Dereliction of Duty. “Washington retained the authority to cancel missions due to poor weather….” The problem, of course, was that “the weather changed faster than people in Vietnam could inform Washington of those changes.”

These two examples point to the same structural failure: centralized control becomes a bottleneck in dynamic, distributed environments. The decision-maker can only process so much information before the system breaks down.

This is worth sitting with as we think about applying our management approaches to new technologies. The prevailing posture for working with AI is “human in the loop,” the idea that a person should review and approve key decisions before they’re executed. For quality control, this sounds completely reasonable. But if we think of the AI agents we develop as talented employees, reviewing their every decision risks turning “human in the loop” into a form of micromanagement, making us the bottleneck.

The answer isn’t to abandon oversight of AI agents. Rather, it’s to identify the decisions that are consequential enough to require oversight, even at the cost of speed. It also means honestly appraising where we’re truly the expert (the Napoleon of our domains) and where we hold onto decisions simply out of habit or fear of giving up control.

That appraisal is critical because, as with delegating to a human, once we’re willing to give up control, we can start working on making the agent an effective decision-maker, one we can trust.